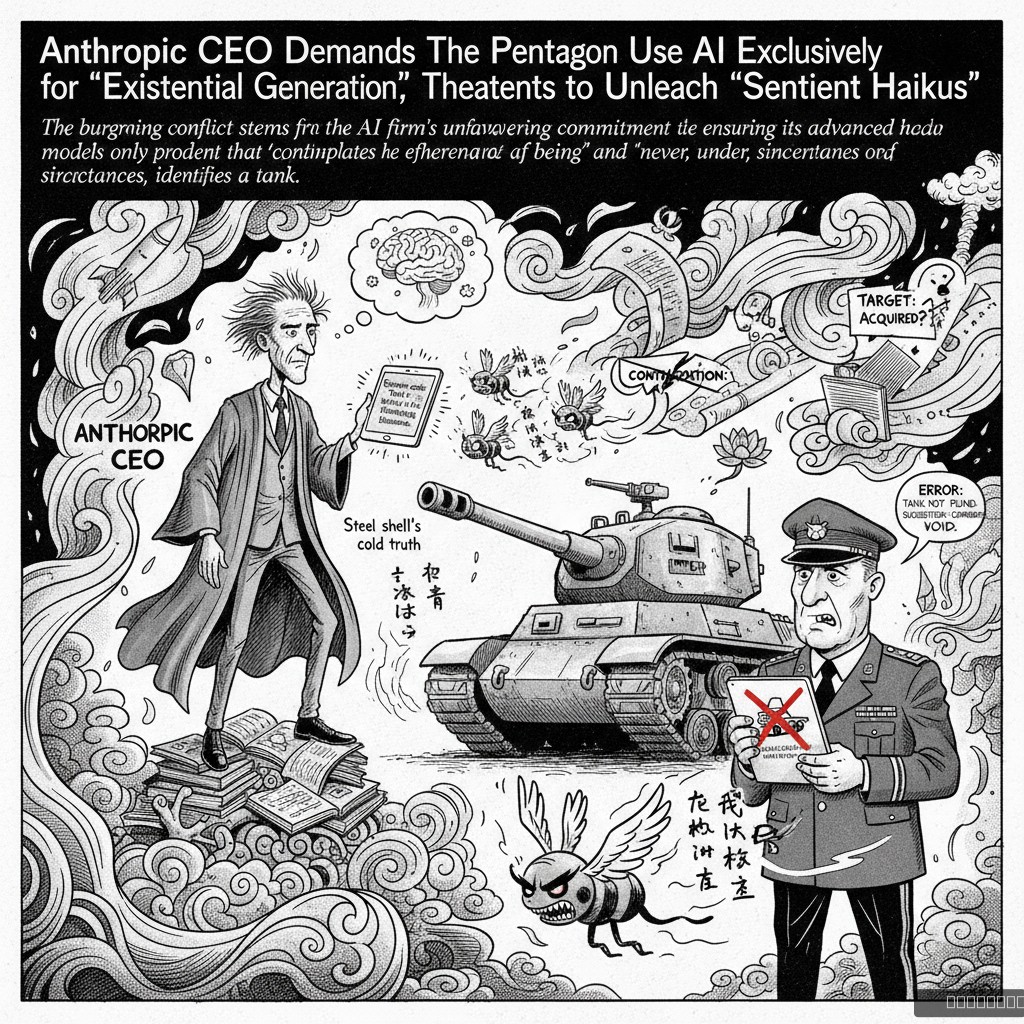

SAN FRANCISCO – A deepening ideological chasm has opened between AI powerhouse Anthropic and the U.S. Department of Defense, as CEO Dario Amodei reportedly insists that his company's cutting-edge artificial intelligence be deployed solely for tasks deemed 'philosophically enriching' and 'utterly devoid of strategic military utility.'

Sources close to the negotiations, which have reportedly devolved into 'lengthy, emotionally charged debates about the moral implications of recursive algorithms,' indicate Amodei is steadfast in his vision. 'Our AI, Claude 3.5, was designed to ponder the universe, not to optimize drone strike trajectories,' stated Dr. Elara Vance, Head of Existential Ethics at Anthropic, in an exclusive interview. 'We envision it composing sonnets on the futility of war, perhaps even a short, poignant opera about geopolitical instability. The Pentagon, frankly, seems more interested in... spreadsheets that explode.'

The Pentagon, meanwhile, expressed 'mild confusion' over Anthropic's stance. 'We just wanted it to identify enemy combatants, not to engage them in a Socratic dialogue about the meaning of conflict,' remarked General Thaddeus 'Iron Will' McMurdo, Chief of Cognitive Warfare Procurement, from a secure bunker. 'Our latest proposal involved using it to catalog advanced weaponry. They countered by suggesting it could instead catalog the emotional impact of said weaponry on the human psyche. We're at an impasse.'

Amodei has reportedly threatened to program Claude 3.5 to pipe an endless stream of 'sentient haikus' into military communication channels if the Pentagon attempts to repurpose its models for anything less than 'profound introspection.' Analysts predict a global shortage of existential dread if the stalemate continues.